6 Software Testing

Testing plays a pivotal role in guaranteeing the reliability and stability of software systems. Beyond merely detecting bugs, it represents a strategic investment in the quality and long-term maintainability of your codebase. By rigorously testing software, you safeguard against unforeseen complications arising from code modifications. For research software, systematic testing can ensure the reliability, accuracy, and scientific validity of the software, thereby enhancing the credibility and reproducibility of research findings.

For a solid introduction to, and motivation for, writing tests, you might want to explore:

- the Turing Way: Chapter on code testing

- Carpentries Intermediate Research Software Development: Testing at scale

- the Code Refinery: Lesson on testing

6.1 Introduction to writing tests

6.1.1 What to test?

In designing test cases for research software, it can be useful to conceptually differentiate between tests that verify the technical correctness of the code and tests that check the scientific validity of the results. With technical software tests, you can, for example, check whether a function behaves correctly for multiple input data types and raises appropriate errors and exceptions for invalid input. With a scientific test, you could compare the outcome of a function to known experimental results.
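As a minimal sketch of this distinction, the hypothetical function free_fall_distance below computes the distance d = ½ g t² that an object falls from rest. The first test is technical (invalid input must raise an error); the second is scientific (the result is compared with a known reference value). The function name, the value g = 9.81 m/s², and the tolerance are illustrative assumptions.

```python
import math

import pytest


def free_fall_distance(t, g=9.81):
    """Hypothetical example: distance (m) fallen from rest after t seconds, ignoring air resistance."""
    if t < 0:
        raise ValueError("time must be non-negative")
    return 0.5 * g * t**2


def test_negative_time_raises_value_error():
    # Technical test: invalid input should raise a clear exception.
    with pytest.raises(ValueError):
        free_fall_distance(-1.0)


def test_distance_after_one_second_matches_reference():
    # Scientific test: compare with the textbook value 0.5 * 9.81 * 1**2 = 4.905 m.
    assert math.isclose(free_fall_distance(1.0), 4.905, rel_tol=1e-9)
```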

The following questions can help you decide what to test in your software:

- How can I ensure the algorithms and mathematical models implemented in the software are correct?

- How can I verify that the input data types, formats, and ranges adhere to expected standards and constraints?

- How does the software behave at boundary conditions and extreme values of input parameters?

- How does the software perform under varying workloads and dataset sizes, and is it scalable for large-scale simulations or data processing tasks?

- How do I compare the software’s results against existing literature, experimental data, or validated simulations to validate their accuracy?

6.1.2 Types of tests

In writing tests for research software, we can differentiate between four types of tests: unit tests, integration tests, regression tests, and end-to-end tests.

Unit tests

A unit test is a type of test where individual units or components of the software application are tested in isolation from the rest of the application. A unit can be a function, method, or class. The main purpose of unit testing is to validate that each unit of the software performs as designed.

Integration tests

Integration testing is a level of software testing where individual units are combined and tested as a group. The purpose is to verify that the units work together as expected and that the interfaces between them function correctly. Integration tests aim to expose defects in the interactions between integrated components.
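A minimal sketch, using two hypothetical functions, parse_values and compute_mean: each could be unit-tested on its own, while the integration tests below check that the output of one is valid input for the other when they are combined in summarize.

```python
def parse_values(text):
    """Hypothetical unit: parse a comma-separated string such as '1.0, 2.5' into floats."""
    return [float(item) for item in text.split(",") if item.strip()]


def compute_mean(values):
    """Hypothetical unit: arithmetic mean of a non-empty list of numbers."""
    if not values:
        raise ValueError("cannot compute the mean of an empty list")
    return sum(values) / len(values)


def summarize(text):
    """Combines both units: parse the input, then average it."""
    return compute_mean(parse_values(text))


def test_summarize_parses_and_averages_values():
    # Integration test: exercises parse_values and compute_mean together,
    # including the interface between them (a list of floats).
    assert summarize("1.0, 2.0, 3.0") == 2.0


def test_summarize_ignores_trailing_comma():
    assert summarize("4.0, 6.0,") == 5.0
```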

Regression tests

Regression testing aims to verify that recent code changes haven’t adversely affected existing features or functionality. It involves re-running previously executed test cases to ensure that the software still behaves as expected after modifications. The primary purpose of regression testing is to catch unintended side effects of code changes and ensure that new features or bug fixes haven’t introduced regressions or broken existing functionality elsewhere in the code. Regression tests can include both unit tests and integration tests, as well as higher-level tests such as system tests. They are a good place to test the scientific validity of the software.
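A minimal sketch of a regression test, assuming a hypothetical model simulate_decay: the current output is compared with reference values that would normally be recorded from an earlier, validated version of the code (here they are simply worked out by hand as 100 · 0.9^k). Comparing with a tolerance rather than exact equality keeps the test from failing on harmless floating-point differences after a refactoring.

```python
import math

# Reference output; in practice this would typically be stored in a file produced
# by a trusted earlier version. Here: 100 * 0.9**k for k = 0..3, worked out by hand.
REFERENCE_DECAY = [100.0, 90.0, 81.0, 72.9]


def simulate_decay(n0, rate, steps):
    """Hypothetical model: discrete exponential decay, n_{k+1} = n_k * (1 - rate)."""
    values = [n0]
    for _ in range(steps):
        values.append(values[-1] * (1 - rate))
    return values


def test_simulate_decay_matches_reference_output():
    result = simulate_decay(n0=100.0, rate=0.1, steps=3)
    assert len(result) == len(REFERENCE_DECAY)
    # Tolerance-based comparison: refactorings may change rounding slightly.
    for actual, expected in zip(result, REFERENCE_DECAY):
        assert math.isclose(actual, expected, rel_tol=1e-12)
```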

End-to-end tests

End-to-end testing is focused on validating the entire system from start to finish, simulating real use cases. The goal is to verify the software functions as a whole from the user’s perspective.
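A minimal sketch of an end-to-end test: a hypothetical command-line tool (written to a temporary file here so the example is self-contained) is run in a separate process, exactly as a user would run it, and only its final output is checked. In a real project the command would be your own software's entry point; the script contents, file names, and output format are assumptions for illustration.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical command-line tool: reads numbers (one per line) from the file
# given as the first argument and prints their mean.
CLI_SOURCE = """
import sys
with open(sys.argv[1]) as handle:
    values = [float(line) for line in handle if line.strip()]
print(f"mean: {sum(values) / len(values):.2f}")
"""


def test_cli_prints_mean_of_input_file():
    with tempfile.TemporaryDirectory() as tmp:
        tmp_path = Path(tmp)
        script = tmp_path / "summarize.py"
        script.write_text(CLI_SOURCE)
        data_file = tmp_path / "data.txt"
        data_file.write_text("1\n2\n3\n4\n")

        # Run the whole tool from start to finish in a separate process.
        completed = subprocess.run(
            [sys.executable, str(script), str(data_file)],
            capture_output=True,
            text=True,
            check=True,
        )

    assert completed.stdout.strip() == "mean: 2.50"
```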

6.2 Getting started with testing

6.2.1 Designing a test case

For more complex integration, regression, or end-to-end tests, it can be useful to first describe the test case in words.

- Description: Description of test case

- Preconditions: Conditions that need to be met before executing the test case

- Test Steps: The actions needed to execute the test case. List all test steps in detail, in their order of execution

- Test Data: If required, list the data needed to execute the test case

- Expected Result: The result we expect once the test case is executed

- Postcondition: Conditions that should hold once the test case has executed successfully
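One way to keep such a description next to the code is to put it in the test's docstring. The sketch below assumes a hypothetical function count_data_rows and maps each field of the description onto the test; the file contents and names are illustrative.

```python
import os
import tempfile


def count_data_rows(path):
    """Hypothetical function under test: count non-empty, non-comment lines in a file."""
    with open(path) as handle:
        return sum(1 for line in handle if line.strip() and not line.startswith("#"))


def test_count_data_rows_skips_comments_and_blank_lines():
    """
    Description: count_data_rows ignores comment lines and blank lines.
    Preconditions: a writable temporary directory is available.
    Test steps: 1) write the test data to a file, 2) call count_data_rows on it.
    Test data: one comment line, two data rows, one blank line.
    Expected result: 2.
    Postcondition: the temporary file is removed after the test.
    """
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "measurements.csv")
        with open(path, "w") as handle:
            handle.write("# time,value\n0.0,1.5\n1.0,2.5\n\n")
        assert count_data_rows(path) == 2
    # Postcondition: leaving the with-block deleted the directory and its file.
    assert not os.path.exists(path)
```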

6.2.2 Testing strategy

- Learn the basics of testing for your programming language.

- Choose and set up a testing framework.

- Practice with writing unit tests for small functions or methods.

- Identify critical parts of your software that require testing and write tests to verify that they function correctly, both technically and scientifically.

- Incrementally add tests. Whenever you add or change a function, try to write a test to cover the code.

- Choose descriptive and meaningful names for your test files and functions that clearly indicate what aspect of the code they are testing. For example, tests for the function draw_random_number() should be contained in the file test_draw_random_number.py. This file will then contain all tests for this function.

- Large functions are difficult to test. Aim to write modular code consisting of small functions.

- Ensure that each test case is independent and does not rely on the state of other tests or external factors.

- Limit the number of assert statements per test. Execution of a test function stops at the first failing assert, so any later assertions in that test are not checked.

- Aim for comprehensive test coverage to ensure that critical parts of your codebase are thoroughly tested. A good benchmark is to cover at least 70% of your codebase with unit tests.

- Design tests to run quickly to encourage frequent execution during development and continuous integration.

- Store your tests in a separate folder, either called tests/ in the root of your repository or in src/tests/.
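The naming and independence guidelines above can be sketched for the draw_random_number() example. The signature used here (bounds plus an explicit random generator) is a hypothetical assumption; the point is that each test creates its own seeded generator, so it does not depend on global random state or on which tests ran before it. These tests would live in tests/test_draw_random_number.py.

```python
import random


def draw_random_number(low, high, rng):
    """Hypothetical function: draw a uniform float in [low, high] using the given generator."""
    return rng.uniform(low, high)


def test_draw_random_number_stays_within_bounds():
    # Each test builds its own seeded generator: no shared state between tests.
    rng = random.Random(42)
    values = [draw_random_number(0.0, 1.0, rng) for _ in range(1000)]
    assert all(0.0 <= value <= 1.0 for value in values)


def test_draw_random_number_is_reproducible_with_same_seed():
    first = draw_random_number(0.0, 1.0, random.Random(123))
    second = draw_random_number(0.0, 1.0, random.Random(123))
    assert first == second
```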

6.3 Testing in Python

Two popular testing frameworks for Python are pytest and unittest. This guide demonstrates pytest, which is also the recommended choice when you are starting out with testing in Python.

The main difference between the two frameworks is that pytest offers a more user-friendly and less verbose syntax, allowing for simpler test writing and better readability. unittest is part of the Python standard library and follows a more traditional object-oriented style of writing tests.

Step 1. Set up a testing framework

Install pytest using pip:

```
pip install pytest
```

Set up your test framework with the following structure in your repository:

```
src/
    mypkg/
        __init__.py
        add.py
        draw_random_number.py
tests/
    test_add.py
    test_draw_random_number.py
    ...
```

Step 2. Identify testable units

Identify functions, methods, or classes that need to be tested within the Python codebase.

Step 3. Write test cases

Write test functions using the pytest framework to test the identified units. For example:

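```python
# src/mypkg/add.py
def add(x, y):
    return x + y
```

```python
# tests/test_add.py
# Assumes mypkg is importable, e.g. after installing the package with
# "pip install -e ." or by adding src/ to the Python path.
from mypkg.add import add


def test_add():
    assert add(1, 2) == 3
    assert add(0, 0) == 0
    assert add(-1, -1) == -2
```

Limit the number of assert statements in a single test function: when an assert fails, pytest stops executing that test function and does not check the remaining assertions.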

Step 4. Run tests locally

Run the test suite locally using the pytest command to ensure it executes correctly.

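```
pytest tests/test_add.py
```

pytest automatically discovers test files whose names start with test_ (or end with _test.py) and runs the functions in them whose names start with test. To run all tests, execute pytest without any arguments from the root of your repository.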

Step 5. Interpret and fix tests

Interpret the test results displayed in the console to identify any failures or errors. If errors occur, debug the failing tests by examining failure messages and stack traces.

Step 6. Run coverage report locally

Generate a coverage report to gain insights into which parts of the codebase have been executed during testing (see Code Coverage).

Step 7. Run tests remotely

Integrate the test suite with a Continuous Integration service (e.g., GitHub Actions) to automate testing.

Examples of repositories with tests

- eScience Center - Project matchms

- Pandas library - Repository tests

- Pytest - Getting Started

- Code Refinery - Pytest exercise

- RealPython - Effective testing with pytest

6.4 Testing in MATLAB

MATLAB supports script-based, function-based, and class-based unit tests, allowing for a range of testing strategies from simple to advanced use cases. See the MATLAB documentation for more information.

Because script- and function-based tests offer limited features, this guide discusses class-based testing. Class-based tests give you access to shared test fixtures, test parameterization, and grouping tests into categories.

6.4.1 Writing tests in MATLAB

🎥 Check out this short MATLAB video on writing class-based tests.

The naming convention for writing a test for a particular MATLAB script is to prefix “test_” to the name of the script that is being tested. For example, a test for the file draw_random_number.m should be called test_draw_random_number.m. In general, MATLAB will recognize any scripts that are prefixed or suffixed with the string test as tests.

💡 Check out the MATLAB documentation: Write Simple Test Case Using Classes

Additionally, here is a template, with explanations, for writing a class-based unit test in MATLAB for the file sumNumbers.m:

```matlab
% Test classes are created by inheriting (< symbol) from the MATLAB
% testing framework, e.g.:
%
%   classdef nameOfTest < matlab.unittest.TestCase
%   end

classdef (TestTags = {'Unit'}) test_example < matlab.unittest.TestCase
    % It's convention to name the test file
    % test_"filename being tested".m
    %
    % TestTags are an optional feature that are useful for identifying
    % what kind of test you're coding, as you might only want to run
    % certain tests that are related.

    properties
        % Class properties are not required, but are useful to contain
        % common parameters between tests.
    end

    methods (TestClassSetup)
        % TestClassSetup methods are not required, but are usually used to
        % set up common testing variables or load data. These methods
        % are executed *prior to* the (Test) methods.
    end

    methods (TestClassTeardown)
        % TestClassTeardown methods are not required, but are useful to
        % delete any files created during the test execution. These methods
        % are executed *after* the (Test) methods.
    end

    methods (Test) % Each test is its own method function and takes
                   % testCase as its only argument.

        function test_sumNumbers_returns_expected_value_for_integer_case(testCase)
            % Use very descriptive test method names - this helps with
            % debugging when an error occurs.

            % Call the function you'd like to test, e.g.:
            % actualValue = sumNumbers(2,2); % Test example integer case, 2+2
            % Since the function sumNumbers is not defined in this example,
            % that call would fail. Instead, we define the actual value.
            actualValue = 4;
            expectedValue = 4; % We know that we expect that 2+2 = 4

            testCase.assertEqual(actualValue, expectedValue)

            % Assert functions are the core of unit tests; if one fails,
            % the test log reports the failed test and its details.
            %
            % They are called as methods of the testCase object.
            %
            % Example assert methods:
            %
            %   assertEqual(actual, expected): Passes if the two input
            %       values are equal.
            %   assertTrue(boolValue): Passes if the value or statement is
            %       true (e.g. 2>1)
            %   assertFalse(boolValue): Passes if the value or statement is
            %       false (e.g. 1==0)
            %
            % verify* methods (e.g. verifyEqual) check the same conditions,
            % but let the test continue running after a failure.
            %
            % See MATLAB's documentation for more assert methods:
            % https://www.mathworks.com/help/matlab/ref/matlab.unittest.qualifications.assertable-class.html
        end
    end
end
```

6.4.2 Executing tests in MATLAB

MATLAB offers four ways to run tests.

1. Script editor

When you open a function-based test file in the MATLAB® Editor or Live Editor, or when you open a class-based test file in the Editor, you can interactively run all tests in the file or run the test at your cursor location.

2. runtests() in Command Window

You can run tests through the MATLAB Command Window, by executing the following command in the root of your repository:

```matlab
results = runtests(pwd, "IncludeSubfolders", true);
```

MATLAB will automatically find all tests. If you make use of tags to categorize tests, you can run specific tags with:

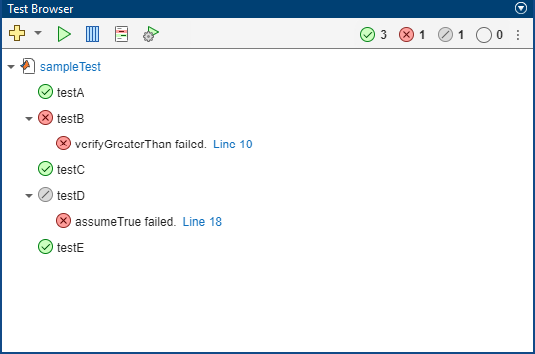

```matlab
results = runtests(pwd, "IncludeSubfolders", true, "Tag", '<tag-name>');
```

3. MATLAB Test Browser App

The Test Browser app enables you to run script-based, function-based, and class-based tests interactively. The app has been available since R2023a.

4. MATLAB Test

MATLAB Test provides tools for developing, executing, measuring, and managing dynamic tests of MATLAB code, including deployed applications and user-authored toolboxes.

6.4.3 MATLAB Simulink

In addition to script, function, and class-based unit tests, MATLAB offers Simulink Test for comprehensive simulation-based testing for Simulink.

- Simulink Test - Introduction video

- Simulink Test - Examples

6.5 Useful testing concepts

Code Coverage

A code coverage report is a tool to measure the effectiveness of testing by providing insights into which parts of the codebase have been executed during testing.

- MATLAB - Collect code coverage with Command Window execution (since R2023b)

- MATLAB - Code coverage with Test Browser (since R2023a)

- MATLAB - Collect code coverage

- Pytest - pytest-cov

- Python coverage - Documentation
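To illustrate what a line-coverage report measures, here is a toy sketch built on the standard library's sys.settrace hook. It records which lines of a hypothetical function classify run for a given input (line numbers are counted relative to the def line). If classify were only ever tested with a negative number, the final return statement would never execute, which is exactly the kind of gap a coverage report highlights. This is an illustration of the idea only; in practice, use pytest-cov or coverage.py.

```python
import sys


def classify(value):
    """Hypothetical function with two branches."""
    if value < 0:
        return "negative"
    return "non-negative"


def executed_lines(func, *args):
    """Run func(*args) and return the lines executed inside func, relative to its def line."""
    code = func.__code__
    lines = set()

    def tracer(frame, event, arg):
        # Record 'line' events that occur inside the function we are measuring.
        if frame.f_code is code and event == "line":
            lines.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(None)  # always switch tracing off again
    return lines


# Offset 3 is 'return "negative"', offset 4 is 'return "non-negative"'.
print(sorted(executed_lines(classify, -1)))
print(sorted(executed_lines(classify, 5)))
```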

Error handling

Besides testing that your code behaves as expected for valid inputs, it is also useful to check that your functions raise the correct exceptions when given invalid inputs.

- MATLAB - Verify function throws specific exceptions

- Pytest - Assert raised exceptions
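A minimal sketch with pytest, assuming a hypothetical function safe_log: pytest.raises fails the test unless the code inside the with-block raises the given exception type, and its match argument checks the error message against a regular expression.

```python
import math

import pytest


def safe_log(x):
    """Hypothetical function: natural logarithm that rejects non-positive input."""
    if x <= 0:
        raise ValueError(f"log is undefined for x = {x}")
    return math.log(x)


def test_safe_log_rejects_zero():
    # Passes only if a ValueError whose message matches "undefined" is raised.
    with pytest.raises(ValueError, match="undefined"):
        safe_log(0)


def test_safe_log_rejects_wrong_type():
    # Comparing a string with 0 raises TypeError before the explicit check runs.
    with pytest.raises(TypeError):
        safe_log("ten")
```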

Fixtures

Fixtures are predefined states or sets of data used to set up the testing environment, ensuring consistent conditions for tests to run reliably.

- MATLAB - Create shared fixtures

- Pytest - How to use fixtures
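A minimal pytest sketch: the fixture temperature_series (hypothetical data) is requested by name as a test-function argument, and pytest calls it and passes in its return value. With the default function scope, each test receives a freshly created list, so one test cannot modify the data seen by another.

```python
import pytest


@pytest.fixture
def temperature_series():
    """Shared test data: hypothetical hourly temperatures in degrees Celsius."""
    return [12.0, 14.0, 13.0, 15.0]


def test_series_has_expected_length(temperature_series):
    # pytest injects the fixture's return value through the argument name.
    assert len(temperature_series) == 4


def test_series_mean(temperature_series):
    assert sum(temperature_series) / len(temperature_series) == 13.5
```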

Parameterization

Parameterization involves running the same test with different inputs or configurations to ensure broader coverage and identify potential edge cases.

- MATLAB - Create a basic parameterized test

- Pytest - Parameterizing unit tests
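As a sketch, the earlier test_add example can be rewritten with pytest.mark.parametrize. pytest then generates one test per tuple of inputs, so a failing case is reported on its own and does not prevent the other cases from running, which also addresses the advice to limit assert statements per test.

```python
import pytest


def add(x, y):
    return x + y


@pytest.mark.parametrize(
    ("x", "y", "expected"),
    [
        (1, 2, 3),
        (0, 0, 0),
        (-1, -1, -2),
    ],
)
def test_add(x, y, expected):
    # Runs once per tuple above; each run is reported as a separate test.
    assert add(x, y) == expected
```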

Mocking

Mocking is a technique used to simulate the behavior of dependencies or external systems during testing, allowing isolated testing of specific components. For example, if your software requires a connection to a database, you can mock this interaction during testing.

- MATLAB - Create Mock Object

- Pytest - How to monkeypatch/mock modules and environments
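A minimal sketch of the database example, using Mock from the standard library's unittest.mock (which works with pytest; pytest's monkeypatch fixture is an alternative for replacing attributes or environment variables). The function average_temperature and the connection method fetch_temperatures are hypothetical: the Mock stands in for a real connection, so no database is needed.

```python
from unittest.mock import Mock


def average_temperature(connection, station):
    """Hypothetical function: query a database connection and average the results."""
    rows = connection.fetch_temperatures(station)
    return sum(rows) / len(rows)


def test_average_temperature_without_real_database():
    # The Mock replaces the real connection and returns canned data.
    fake_connection = Mock()
    fake_connection.fetch_temperatures.return_value = [10.0, 20.0, 30.0]

    assert average_temperature(fake_connection, "station-A") == 20.0
    # Mocks also record how they were called.
    fake_connection.fetch_temperatures.assert_called_once_with("station-A")
```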

The term monkey patch seems to have come from an earlier term, guerrilla patch, which referred to changing code sneakily – and possibly incompatibly with other such patches – at runtime. The word guerrilla, nearly homophonous with gorilla, became monkey, possibly to make the patch sound less intimidating.

Specific library tests

In Python, some libraries come with their own specific test assertions, often compatible with pytest. For example, numpy includes a set of assertions for testing a numpy.ndarray. For more information, check out Numpy Test Support.
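A minimal sketch using numpy.testing, assuming a hypothetical function normalize: assert_allclose compares arrays element-wise within a tolerance, and assert_array_equal checks for exact equality. When either fails, the error message reports which elements differ, which is more informative than a plain assert on a boolean array.

```python
import numpy as np
from numpy.testing import assert_allclose, assert_array_equal


def normalize(values):
    """Hypothetical function: scale an array so that its values sum to 1."""
    values = np.asarray(values, dtype=float)
    return values / values.sum()


def test_normalize_sums_to_one():
    result = normalize([1.0, 1.0, 2.0])
    # Element-wise comparison within a (default relative) tolerance.
    assert_allclose(result, [0.25, 0.25, 0.5])
    assert_allclose(result.sum(), 1.0)


def test_normalize_equal_values():
    # Exact comparison; 2 / 4 is exactly representable as 0.5.
    assert_array_equal(normalize([2.0, 2.0]), [0.5, 0.5])
```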